Over the years I have built a ton of scrapers, parsers and scripts to get at all kinds of data on the web, mostly in PHP, Ruby and, more recently, Node.js (JavaScript).

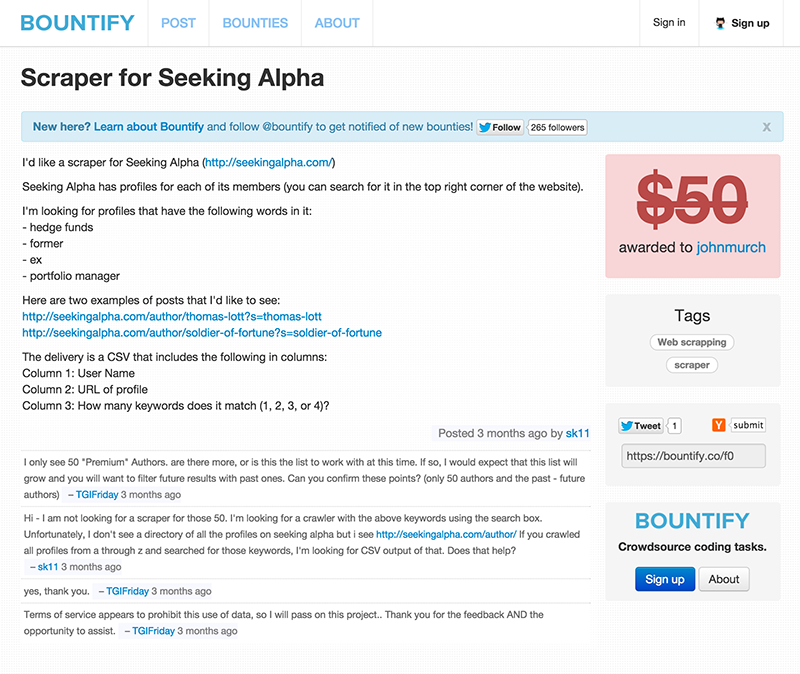

When I saw a post on Bountify asking for a Seeking Alpha scraper, I was curious. I wasn’t familiar with Seeking Alpha, but I had a free night: my wife was at an event and I was at home watching the dog, so I figured, why not give it a try?

After taking Seeking Alpha for a spin, I knew I could parse the profiles, but scraping through their search was going to be more work than the bounty was worth. Since only a couple of developers, if any, were likely to post an answer, I decided to do what I could in as little time as possible.

I simplified the code below, but you can download the gist.

    var fs = require('fs'),
      request = require('request'),
      async = require('async'),
      _ = require('underscore'),
      cheerio = require('cheerio');

    // Rows that will be written to results.csv
    var outputCSV = [];

    //******************************************************
    // EACH CATEGORY URL (Top 100)
    // http://seekingalpha.com/leader-board/opinion-leaders
    //******************************************************
    var urls = [
      "http://seekingalpha.com/opinion-leaders/long-ideas",
      "http://seekingalpha.com/opinion-leaders/long-ideas/2",
      "http://seekingalpha.com/opinion-leaders/long-ideas/3",
      // ...
      "http://seekingalpha.com/opinion-leaders/mutual-funds/3",
      "http://seekingalpha.com/opinion-leaders/mutual-funds/4"
    ];

    // Profile paths collected from every leaderboard page
    var authors = [];
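The page URLs in the list follow a regular pattern: a category path for the first page, then `/2`, `/3` and so on for later pages. That means the list could be generated instead of typed out. This is only a sketch: `buildLeaderboardUrls` is a hypothetical helper that is not in the gist, it uses only the two categories visible in the list, and the page count of 4 is an assumption.

```javascript
// Hypothetical helper: builds leaderboard page URLs from category slugs.
// Assumes page 1 has no numeric suffix and later pages end in /2, /3, ...
function buildLeaderboardUrls(categories, pagesPerCategory) {
  var base = "http://seekingalpha.com/opinion-leaders/";
  var result = [];
  categories.forEach(function (category) {
    for (var page = 1; page <= pagesPerCategory; page++) {
      result.push(base + category + (page === 1 ? "" : "/" + page));
    }
  });
  return result;
}

// Only the two categories visible in the list; the page count is an assumption.
var generatedUrls = buildLeaderboardUrls(["long-ideas", "mutual-funds"], 4);
console.log(generatedUrls.join("\n"));
```

The real number of pages may differ per category, so treat the second argument as something to check against the site.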

    // Step 1: fetch every leaderboard page and collect the author profile links.
    async.forEach(urls, function(url, urlcb) {
      request(url, function(error, response, body) {
        if (!error && response.statusCode == 200) {
          var $ = cheerio.load(body);

          ////*[@id="author_articles_wrapper"]/div/div[1]/ul
          $('.ld_top_list li a').each(function(i, elm) {
            authors.push($(this).attr('href'));
          });
          $('.ld_more_list li a').each(function(i, elm) {
            authors.push($(this).attr('href'));
          });

          console.log("FETCH: " + url);
          urlcb();
        } else {
          console.log("Check Connection: Issue viewing: " + url);
          console.log(error);
          process.exit(0);
        }
      });
    }, function() {
      // Step 2: visit each unique author profile and look for the keywords.
      var uniqAuthors = _.uniq(authors);

      async.forEach(uniqAuthors, function(name, pcb) {
        var url = 'http://seekingalpha.com' + name;

        request(url, function(error, response, body) {
          if (!error && response.statusCode == 200) {
            console.log("Fetching: " + url);
            var $ = cheerio.load(body);
            var author = $("#author_full_name").text();
            var profile = $('.profile_item_mini_text').text().toLowerCase();
            // console.log(name+"|"+profile);

            // Check if keyword is in profile - save as boolean true or false.
            // contains() is a plain substring test, so 'ex' also matches
            // inside longer words such as "experience".
            var boolHedgeFund = contains(profile, 'hedge fund');
            var boolFormer = contains(profile, 'former');
            var boolEx = contains(profile, 'ex');
            var boolPortfolioManager = contains(profile, 'portfolio manager');

            var count = 0;
            var keywords = [];
            if (boolHedgeFund) { count++; keywords.push('hedge fund'); }
            if (boolFormer) { count++; keywords.push('former'); }
            if (boolEx) { count++; keywords.push('ex'); }
            if (boolPortfolioManager) { count++; keywords.push('portfolio manager'); }

            var capture = {
              author: author,
              url: url,
              keywords: keywords,
              // profile: profile,
              count: count
            };

            // Only keep authors that matched at least one keyword.
            if (count > 0) {
              outputCSV.push(capture);
            }
          }
          // Failed profile requests are skipped rather than aborting the run.
          pcb();
        });
      }, function() {
        // Step 3: write the matches to results.csv.
        console.log("DONE");
        var wstream = fs.createWriteStream('results.csv', {'flags': 'w'});
        wstream.write("Author,URL,Count,Keywords\n");

        async.forEach(outputCSV, function(a, acb) {
          wstream.write(
            JSON.stringify(a.author)
            + "," + a.url + ","
            + a.count + ","
            + JSON.stringify(a.keywords.join())
            + "\n"
          );
          // Signal completion so the final callback below actually runs.
          acb();
        }, function() {
          // Exit only after the stream has flushed everything to disk.
          wstream.on('finish', function() {
            console.log("DONE");
            process.exit(0);
          });
          wstream.end();
        });
      });
    });
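The CSV rows lean on `JSON.stringify` to quote the author and keyword fields. That holds up for simple names, but JSON escapes an embedded double quote as `\"`, while CSV readers generally expect it to be doubled (`""`), as described in RFC 4180. Here is a minimal sketch of a dedicated quoting helper. `csvField` and `csvRow` are hypothetical names that are not part of the gist, and the sample author and URL are made up.

```javascript
// Hypothetical helper: quote one value for CSV output.
function csvField(value) {
  var text = String(value);
  // Quote only when the field contains a comma, a double quote, or a line break.
  if (/[",\r\n]/.test(text)) {
    // Inside a quoted field, a double quote is written as two double quotes.
    return '"' + text.replace(/"/g, '""') + '"';
  }
  return text;
}

// Hypothetical helper: join already-ordered values into one CSV line.
function csvRow(fields) {
  return fields.map(csvField).join(",") + "\n";
}

// Invented sample data, in the same column order as the header row.
var sampleRow = csvRow([
  'Jane "JD" Doe',
  "http://seekingalpha.com/author/jane-doe",
  2,
  "hedge fund,former"
]);
console.log(sampleRow);
```

In the scraper, something like `wstream.write(csvRow([a.author, a.url, a.count, a.keywords.join()]))` could stand in for the concatenation, assuming the helpers are defined in the same file.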

    // Returns true when string r contains substring s.
    function contains(r, s) {
      return r.indexOf(s) !== -1;
    }
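Because `contains` is a plain substring test, a short keyword like `'ex'` also matches inside longer words such as "experience" or "index". One possible refinement is a regular expression with word boundaries. The sketch assumes that keywords should only match as whole words or phrases. `containsWord` is a hypothetical name, and the sample profile strings are invented.

```javascript
// Hypothetical alternative to contains(): match a keyword only as a whole word or phrase.
function containsWord(text, keyword) {
  // Escape regex metacharacters so the keyword is matched literally.
  var escaped = keyword.replace(/[.*+?^${}()|[\]\\]/g, "\\$&");
  // \b requires a word boundary on both sides; "i" makes the match case-insensitive.
  return new RegExp("\\b" + escaped + "\\b", "i").test(text);
}

var matchesStandaloneEx = containsWord("ex hedge fund analyst", "ex");
var matchesInsideWord = containsWord("20 years of experience", "ex");
var matchesPhrase = containsWord("former portfolio manager", "portfolio manager");

console.log(matchesStandaloneEx, matchesInsideWord, matchesPhrase);
```

With these inputs, the standalone "ex" and the phrase match, while the "ex" inside "experience" does not. A hyphenated form such as "ex-trader" would still match, because the hyphen counts as a word boundary.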